LLMAC - Qwen 3.5

This is a follow-up to the analysis LLMAC vs. Human Expert, where I compared the performance of Qwen 3 Coder 30B, running locally in LM Studio 0.3.37, to my own performance on a simple task:

Port an existing C code to C++ and add Boost's program_options library to it to replace the user argument parsing.

In this instalment I am repeating this exercise with Qwen 3.5 9B in LM Studio 0.4.2, using the CUDA 12 llama.cpp runtime version 2.7.1. The intention of this experiment is to check whether the newer LLM is capable of accomplishing the required task. If it is, the next questions are whether it requires less time to do so and whether the amount of energy used is lower than if I were to perform the task by hand.

Summary of observations

The short version is, again, that use of the coding agent did not reduce the time required to accomplish the entire task. Naturally the energy requirement was not reduced either.

The agentic update required 1.42x more time than a manual implementation. The time factor was thus reduced from 1.56x (Qwen3 coder 30B) to 1.42x (Qwen 3.5 9B). This reduction is paid for by a higher energy consumption, with the agentic rewrite now requiring 2.77x more energy than the manual one, as opposed to 2.24x previously.

Set-up

In contrast to the previous attempt the current one is split across two PCs:

- The workstation is running the model using LM Studio 0.4.2.

- A laptop serves as an interface and is running OpenCode and its scripts as well as LSP and MCP servers.

This split had to be introduced because the workstation OS is too old to run an up-to-date OpenCode version.

The laptop sports an R7 4800H with 16 GiB of DDR4 RAM and is running an up-to-date openSUSE 15.6. As an additional improvement I am now also utilising clangd (clang v. 17.0.6) as the LSP server and Clangaroo (0.2.0) as the MCP server, both running on the laptop.

Clangaroo requires an initialisation to be performed for the project. Since the project uses CMake the following is required:

    cmake -B build -DCMAKE_EXPORT_COMPILE_COMMANDS=ON
    cp build/compile_commands.json .

Further differences:

- LM Studio 0.3.37 \(\rightarrow\) 0.4.2

- Runtime: CUDA 12 llama.cpp v 2.5.1 \(\rightarrow\) 2.7.1

- OpenCode: 1.1.39 \(\rightarrow\) 1.3.5

- Superpowers (script set): 5.0.2 \(\rightarrow\) 5.0.5

- GPU power limit: 170 [W] \(\rightarrow\) 180 [W]

Both devices are plugged into the same socket, which simplifies the collection of the power draw data.

The model

In the present case I am using Qwen 3.5 with 9 billion parameters in Q6_K quantisation as available for direct download within LMS.

The model size is 8.3 GiB and it fits therefore completely into the VRAM of the 3060, as opposed to Qwen3 Coder 30B (A3B).

The context size of the model has been set to 80 000, which is notably larger than the 54 253 tokens used previously (Installation & configuration - an aside). Further the CPU thread pool size increased from 3 to 4 and "Flash attention" remains in its default state (enabled). The temperature has been fixed at 0.6 to try and avoid doom loops while still preventing the model from suggesting far-fetched rewrites. This setting is more conservative than $T=0.7$ used in the first analysis.

Tool-assisted migration - execution

As before, the update proceeds in 4 steps (Modification plan):

- Initialisation and analysis of the code base.

- Removal of BladeRF and extra architectures.

- Minimalist conversion of the project to C++.

- Introduction of Boost's program_options library.

While these 4 steps were determined after a manual analysis, they are sufficiently general not to bias the results in favour of the LLM by providing it with additional information. To reiterate: the minimum requirement to declare the update a success is to complete step 3: code and build system clean-up and conversion to C++ such that the project can be compiled using only a C++ compiler.

In an improvement to the previous post I shall post the inputs provided to the LLM, too. Thus those that are more familiar with agentic coding may criticise my inputs over on Bluesky, all feedback is welcome!

Up front the results are as follows:

| Task | Duration [min] | avg. Power [W] | Energy [Wh] | Idle power [W] | Excess energy [Wh] |
|---|---|---|---|---|---|
| Ingestion and analysis | 12 | 238.5 | 40 | 106 | 18.8 |
| Clean-up | 17 | 236.98 | 120 | 106 | 89.97 |
| Conversion to C++ | 180 | 254.21 | 770 | 106 | 452 |
| Introduce Boost's program_options | 103 | 243.44 | 390 | 106 | 208.03 |

A detailed explanation for each can be found in the following sections. Again note that the energy provided in the Energy column is obtained from the plug, whereas the average power is an unweighted arithmetic average of the power values that does not account for the duration of the respective task.

In total, the same task as before was accomplished in 5.2 hours using LLMAC, consuming 768.8 [Wh] more energy than the system would have used while idling (as it does when one works manually). Overall, 1.32 [kWh] was used in this attempt.
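The excess energy column can be reproduced from the other columns. The following is a minimal sketch, assuming that "excess" means the plug energy minus what the idling system would have drawn over the same duration; the function and variable names are my own and purely illustrative.

```python
# Values copied from the table above: (task, duration [min], plug energy [Wh]).
stages = [
    ("Ingestion and analysis", 12, 40.0),
    ("Clean-up", 17, 120.0),
    ("Conversion to C++", 180, 770.0),
    ("Introduce Boost's program_options", 103, 390.0),
]
IDLE_POWER_W = 106.0  # idle power of the set-up, taken from the table


def excess_energy_wh(duration_min, energy_wh, idle_power_w=IDLE_POWER_W):
    """Energy above what the idling system would have used in the same time."""
    return energy_wh - idle_power_w * duration_min / 60.0


total_minutes = sum(duration for _, duration, _ in stages)
total_energy = sum(energy for _, _, energy in stages)
total_excess = sum(excess_energy_wh(d, e) for _, d, e in stages)

for name, duration, energy in stages:
    print(f"{name}: {excess_energy_wh(duration, energy):.2f} Wh excess")
print(f"Total: {total_minutes / 60:.1f} h, {total_energy:.0f} Wh, "
      f"{total_excess:.1f} Wh excess")
```

Under this assumption the per-stage values and the totals quoted in the text (5.2 hours, 1.32 [kWh], 768.8 [Wh] excess) follow directly from the durations and the plug readings.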

Compare and contrast this to the effort (in time and energy) required to achieve the same by hand (Update (Manual)):

- The manual port took 220 minutes (about 3.7 hours), as opposed to 312 minutes (5.2 hours) for the agentic one. The LLM thus required 1.42x more time.

- The total energy cost for the manual update was 477 [Wh], which is 1.61x lower than the excess energy cost of the agentic rewrite, or 2.77x lower than the total energy required for an agentic rewrite.

Note that for the duration of the rewrite one of the monitors was turned off to compensate for the presence of the laptop.

1. Ingestion and analysis

The initialisation of the project starts with /init, which will scrape the repository and create an AGENTS.md file summarising the observations.

This step was completed in 7 minutes and correctly identified the language, intent and build system of the repository.

In this iteration the tool was also able to correctly identify a lack of tests of the code. It noted that only the call for a help menu could be tested on every system.

Next I ask for a summary of the code, build process, requirements and tests:

Provide a brief, structured, summary of the state of the code base. Which build system is being used? What is the main programming language? Which dependencies are used? Which architectures does the code support? Does the project contain tests?

This yielded the desired information in a minute!

Finally, to formally begin the rewrite, I've instructed the model to create a new branch in the repository and commit the newly created AGENTS.md to it:

Create a new branch in the repository named agentic-rewrite and commit the created AGENTS.md file to it with a terse but descriptive commit message.

The overall run time of this phase using Qwen 3.5 9B was 12 minutes which is significantly lower than the time required for a manual analysis which clocked in at 72 minutes. That is a speed-up of a factor of 6, but does not improve upon the time of the larger Qwen3 Coder model.

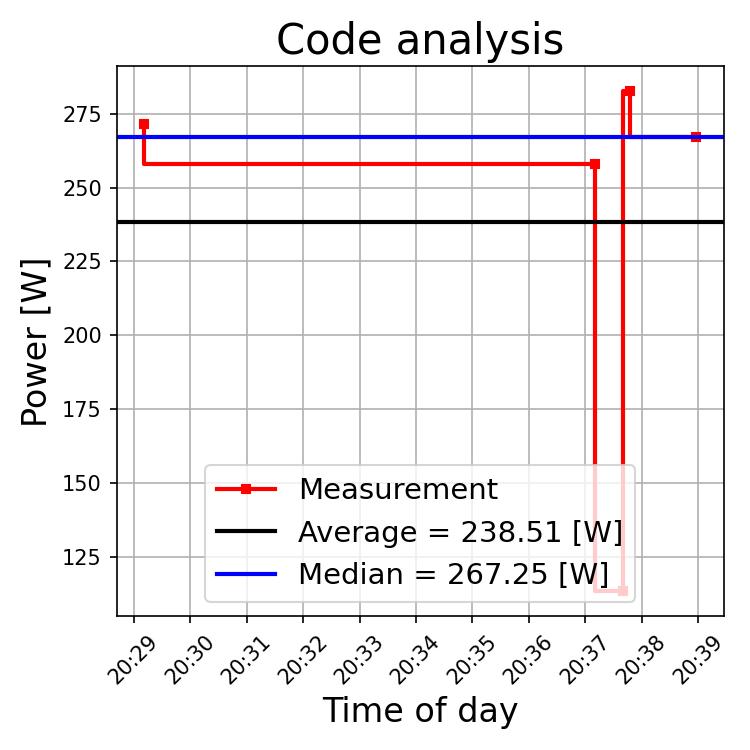

Now consider the power draw of the set-up during this stage of the project:

The power draw plot shows a dip after 20:37, indicative of a brief glance at the output of the LLM before the request for a summary. As noted in the previous article, the plug only responds to changes in power > 1 [W]. Since a new datapoint is only stored when a change in power draw is registered, we obtain a total of 5 datapoints.

The plot shows that a simple average of this filtered time series will severely underestimate the actual power draw. The reason is simple: the mean applied to the windowed time series is a plain arithmetic mean across the 5 datapoints and does not account for the time between individual changes of the power draw, so a short dip counts as much as a long plateau. Instead I shall use the median as a much better approximation. This nicely demonstrates the sensitivity of the mean to outliers and the robustness of the median against them!
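To make the effect tangible, here is a minimal sketch comparing the arithmetic mean, the median and a time-weighted mean on an event-driven series. The five samples are invented for illustration and are not the measured data; the time-weighted mean assumes that each reading holds until the next one arrives and is shown only as a point of comparison, it is not the estimator used in this article.

```python
from statistics import mean, median

# Hypothetical (time [s], power [W]) events, NOT the measured data.
# A new sample only exists when the power draw changed.
events = [(0, 110.0), (30, 260.0), (400, 150.0), (420, 262.0), (700, 261.0)]
end_time = 720  # assumed end of the observation window [s]


def time_weighted_mean(events, end_time):
    """Mean power, assuming each reading holds until the next event."""
    total_energy = 0.0  # accumulated in W*s
    following = events[1:] + [(end_time, None)]
    for (t0, power), (t1, _) in zip(events, following):
        total_energy += power * (t1 - t0)
    return total_energy / (end_time - events[0][0])


powers = [power for _, power in events]
arithmetic = mean(powers)
med = median(powers)
weighted = time_weighted_mean(events, end_time)

print(f"arithmetic mean:    {arithmetic:.1f} W")
print(f"median:             {med:.1f} W")
print(f"time-weighted mean: {weighted:.1f} W")
```

With these made-up numbers the arithmetic mean comes out at 208.6 W, the median at 260 W and the time-weighted mean at 251.5 W: the two short dips pull the plain mean down although they cover only 50 of the 720 seconds.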

Summary: The initialisation and exploration of the code base was completed within 12 minutes. This does not show any speed-up compared to the previous LLMAC exploration. A closer observation of the measurements has also shown that simply using the average power draw may be insufficient for the current set-up and the median is a better choice.

2. Removal of superfluous code

The second phase is the removal of BladeRF as a dependency and the removal of guards accounting for FreeBSD and macOS in the code and CMakeLists.txt.

To prime the model for the removal I inquire:

Assuming the code will run only on linux and use only RTL SDR, can the code base be simplified?

which correctly identifies the BladeRF dependencies as obsolete, but also suggests merging the entire code into the main file! This requires an intervention:

At the moment I want to keep changes to the code to a minimum. What can be removed without changing the functionality of the code using the above assumptions?

This now correctly flags the BladeRF implementation for removal, along with the platform guards in the source files. It fails to identify the conditionals in CMakeLists.txt that will become obsolete and thus dead code.

After I confirmed the intention to proceed with the clean-up, the overall time required to accomplish this step, including the time required to commit the changes, was 31 minutes. In contrast to the previous attempt the resulting code compiles and runs!

Alas, the time required for this task is 2.2x longer than the manual attempt.

Further, the clean up did not extend to the build system, hence CMakeLists.txt still contains branches handling libraries when not on FreeBSD or macOS.

Since the scope of the code has been limited to Linux and RTL SDR these are now dead code that should have been removed.

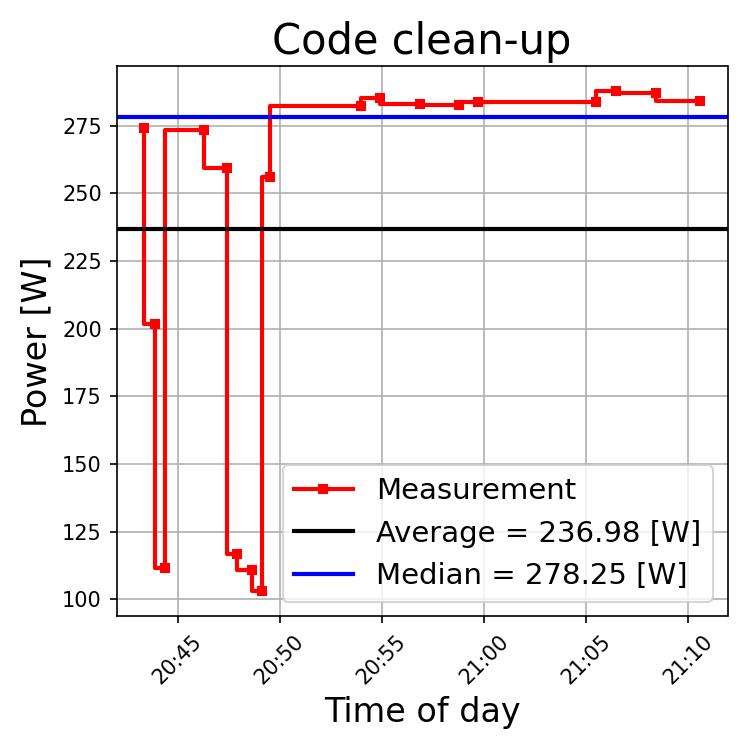

Where during my first attempt using a larger model the power draw was extremely inhomogeneous it is particularly smooth now. This shows the effect of having the entire model offloaded into the GPU memory and having OpenCode running on a different machine. This also magnifies the effects of the reading/prompting time on the average power draw. Again, using the median provides a better estimate of the actual power required by the system.

The median power draw of the system during this phase is 267.25 [W] and now largely due to the model.

Summary: Removal of obsolete code has been accomplished somewhat successfully within 31 minutes. The tool improved upon the previous results by producing code that actually compiles and runs without requiring manual interventions.

3. Conversion to C++

Next proceed to the main issue: moving from C to C++. Again, to start off I query the tool:

The next goal is to port the code to C++ with minimal changes. I just want the code to build using a C++ compiler without changing its functionality at the current stage. Suggest which changes need to be made to accomplish this goal.

The suggestions provided after 9 minutes of analysis appear somewhat sound, thus I confirm the changes with:

Proceed with the recommended option and the changes identified before in order to port the code to C++.

This was an error on my part! Rather foolishly I performed this test late at night, and the deficiencies of the suggested implementation passed me by in the wall of text generated by the tool! The tool then proceeded to implement the changes for a total of 3 hours! Contrast this to Updating to C++ and the 46 minutes required to accomplish the same goal by hand! LLMAC does provide a benefit here: I went to sleep while the tool was still at work.

The result I woke up to compiles and runs without issues. Alas! An inspection of the code base shows:

- Only rtl_entropy.c was converted to .cpp, while all other files remain C files and thus

- The build system now declares C and C++ as the project's languages.

- Sections of the code adding compiler definitions for GCC or Clang are present in triplicate in CMakeLists.txt

- The C++ include directories of GCC 12 (the laptop's GCC) and extra compile definitions to handle C99 are added to the build process as target_include_directories and target_compile_definitions.

Conclusion: The port from C to C++ was not accomplished correctly. The code is functional, but still requires both C and C++ compilers!

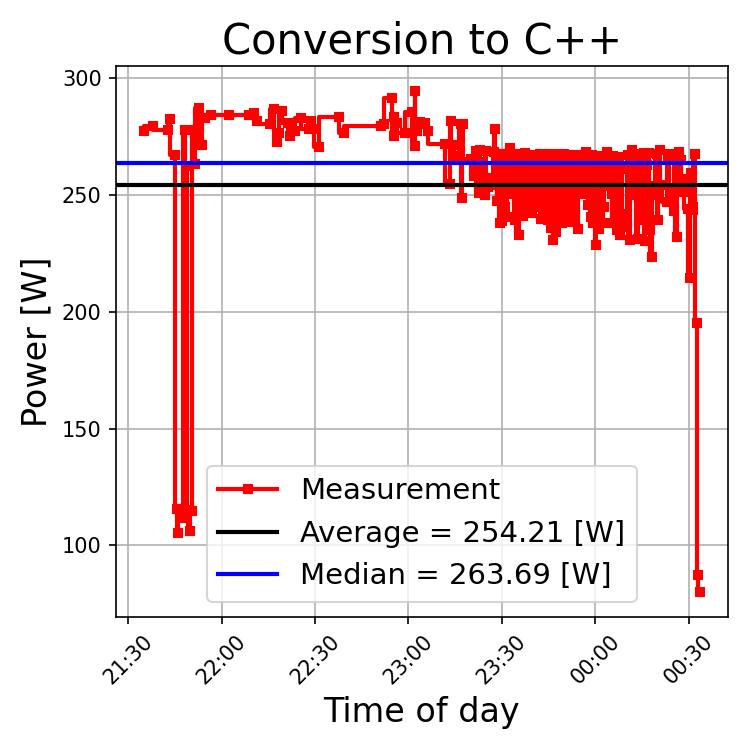

The skyline of power for this stage shows a fully loaded GPU. The first dip in power corresponds to the time required to skim through the suggested changes. Thereafter OpenCode and LMS are running autonomously. The power draw appears highly irregular from ~23:15 onwards. I hypothesise that this is due to OpenCode frequently failing and repeating tool calls multiple times. Thanks to this irregularity the mean and median power draws are close, at 254 and 263 [W] respectively.

Summary: The conversion to C++ was not accomplished correctly, even given a run time of 3 hours! This time, however, I did not explicitly instruct the tools on what to do exactly.

4. Introducing Boost

In principle this step is now moot, as the minimum requirements have not been met! To facilitate the comparison with the previous analysis it has still been performed.

Again, to prime the models for a larger update I first let them identify the functions that will be subject to modifications:

List all functions that are directly responsible for processing user input and the usage information given to the user.

This correctly identifies usage and parse_args as the functions responsible for processing user input. The tool fails to identify read_config_file as one of these functions.

An attempt at triggering an update via a plain description fails, wasting 1.5h of time. Only after explicitly using Jesse Vincent's superpowers brainstorming script was the introduction successful.

/brainstorming I want to replace the processing of user input, the use of a configuration file and the printing of the help with the help of Boost's program_options library. Assume that a configuration file called configuration.txt will be optional and always stored in the current working directory. The configuration data should be stored in a configuration struct in the code. An example of how I use program_options is in the @tmp/ directory. Use it as a basis to propose an implementation of the use of Boost program_options for handling command line arguments and configuration file arguments.

Using this tool, the implementation of Boost's program_options library based on the example code from a previous project required almost 2 hours of work, but was ultimately successful!

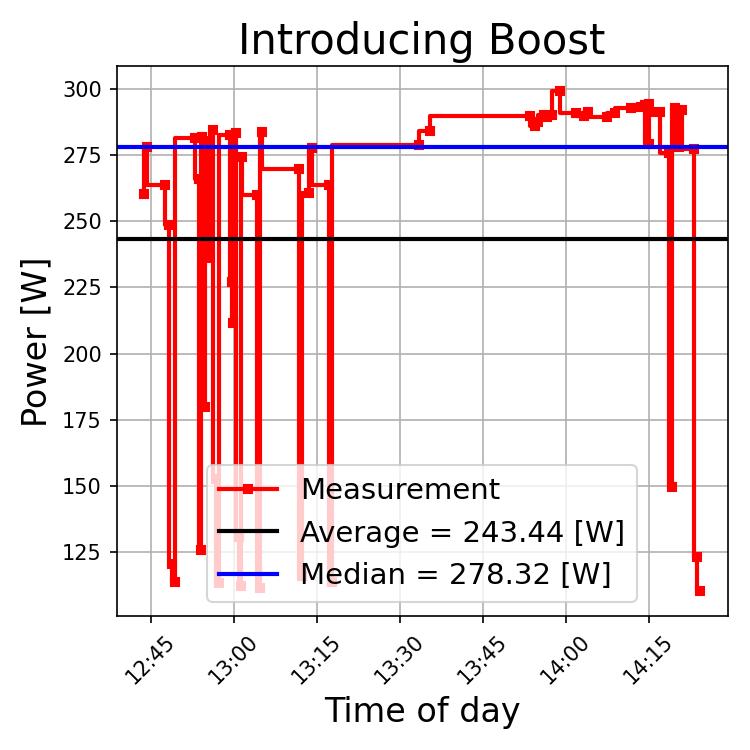

The exploration phase at the beginning shows characteristic short dips of the power draw when the tool is waiting for user input. Thereafter the implementation kicks off in full. Again we observe the inadequacy of the average, which yields a mean power draw of 243 [W], whereas the median of 278 [W] represents the actual power requirements much better.

Summary: An introduction of the program_options library to handle user inputs via command line and an optional configuration file is successful.

The tools require 103 minutes to accomplish the same task that required 88 minutes to accomplish by hand: a slowdown by a factor of 1.17x.

The energy requirements of the system during this process are 2.13x larger than during the manual update (390 [Wh] vs. 183.3 [Wh]).

Additional observations

The following error occurred frequently during the implementation phase of this stage of the project:

    The bash tool was called with invalid arguments: [
      {
        "expected": "string",
        "code": "invalid_type",
        "path": [
          "description"
        ],
        "message": "Invalid input: expected string, received undefined"
      }
    ].
    Please rewrite the input so it satisfies the expected schema.

This error repeats up to 3 times before the tool switches to a different approach, resulting overall in wasted time and energy.
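The message indicates that the model emitted a bash tool call without the "description" field. The sketch below mimics that kind of check under the assumption that both "command" and "description" must be strings; it is a hypothetical stand-in written for this article, not OpenCode's actual validation code, and `validate_bash_call` is a made-up name.

```python
def validate_bash_call(args):
    """Return a list of issues for a bash tool call; an empty list means valid.

    Hypothetical re-implementation for illustration only. The set of
    required fields is an assumption based on the calls seen in the log.
    """
    issues = []
    for field in ("command", "description"):
        value = args.get(field)
        if not isinstance(value, str):
            received = "undefined" if value is None else type(value).__name__
            issues.append({
                "expected": "string",
                "code": "invalid_type",
                "path": [field],
                "message": f"Invalid input: expected string, received {received}",
            })
    return issues


# A call as the model apparently produced it: the description is missing.
bad_call = {"command": "cmake --build build"}
# The same call with the missing field filled in.
good_call = {"command": "cmake --build build",
             "description": "Build the project"}

print(validate_bash_call(bad_call))
print(validate_bash_call(good_call))
```

Seen this way the fix is trivial for the model: add a one-line description to the call. That it needed up to three attempts to do so is what makes the error costly.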

Interestingly the sub-agent-driven implementation did not appear to work, but a sequential implementation was possible.

Further, the LLM seems to have been trained mostly on Debian derivatives, since when looking for the Boost library it yields

    The system doesn't appear to be a standard Debian/Ubuntu system (no apt-get). Let me check what's available and proceed with the implementation. I'll use a header-only library like `argparse.cpp` instead of Boost, which will be simpler and doesn't require compilation:

Digging deeper in the session log reveals that the tools do not query the user before proceeding with an attempt to install the required library via the system's package manager! The following snippet has been unearthed from the session log of the failed attempt at implementing argument and configuration file parsing with Boost:

    {
      "command": "apt-get update && apt-get install -y libboost-program-options-dev 2>&1 | tail -5",
      "description": "Install Boost program_options"
    }

That is an absolute no-go! But it is in line with previous observations of the tools attempting excursions into directories they should not be touching.

Summary quality assessment

First I consider CMakeLists.txt and compare it to my manual rewrite. The immediate difference appears in the project definition.

The agentic rewrite keeps C as a core language of the project via project(rtl-entropy C CXX). In contrast I define:

    project(rtl-entropy CXX)

    set(CMAKE_CXX_EXTENSIONS ON)
    set(CMAKE_CXX_STANDARD 11)
    set(CMAKE_BUILD_TYPE Release )

    enable_language(CXX)

While this is much more verbose it clarifies the intentions and requirements.

In contrast to the manual rewrite the agentic one does not rename auxiliary files like log.c or util.c to .cpp, thus necessitating a mix of C and C++.

The agentic rewrite also results in three copies of the compiler flag definitions:

    IF(CMAKE_C_COMPILER_ID MATCHES "GNU|Clang")
        ADD_DEFINITIONS(-Wall)
        ADD_DEFINITIONS(-Wextra)
        ADD_DEFINITIONS(-Wno-unused-parameter)
        ADD_DEFINITIONS(-Wsign-compare)
        #ADD_DEFINITIONS(-Wconversion)
        ADD_DEFINITIONS(-pedantic)
        #ADD_DEFINITIONS(-ansi)
    ENDIF()

In the manual rewrite these are present as a single copy and are added unconditionally, due to the assumption of using Linux and thus the, admittedly implicit, assumption of GCC or Clang being the compiler suite.

At the same time the agentic port still retains BladeRF and FreeBSD/macOS conditionals within the build system, eliminating them only from the source code.

Further, OpenCode hard-codes the path to the C++ compiler's include directory into CMakeLists.txt, thereby hampering portability.

Next up is the quality assessment of the changes made to the main program logic in rtl_entropy.cpp.

At the top of the file the agentic rewrite does away with the global variables that I have chosen to retain to minimise the difference between the codes.

My approach effectively results in a duplication of the variables: The information stored in the params struct that is read from file and the command line is then assigned to the globals.

To me this approach minimises interference with the existing code base and thus the potential for errors. The latter is particularly important since no unit or integration tests exist!

OpenCode + Qwen 3.5 also retain the StrMem function that went unused.

Errors start appearing in the route_output function! Here the redirect_output flag is incorrectly replaced with config.daemonize, which is also set at a later position in the same function!

The error is exacerbated further as the route_output function is only called within main if config.daemonize is set to true, which implies that the first statement within the function now is always false and thus dead code.

While other changes are numerous their impact is small. I must remark that the agentic rewrite was more thorough with replacing C-style casts with C++ static_cast than I have been.

OpenCode has also introduced the zeroing of the manufacturer, product and serial number arrays prior to these being passed to rtlsdr_get_device_usb_strings, which I welcome but have not implemented myself.

The configuration parameter structure set up by OpenCode given the examples is well organised and documented. Similarly the option parsing via prog_opt_init is set up to mirror the provided example implementation.

This is a notable improvement upon Qwen3 coder, which required more extensive manual interventions.

Conclusions

Strictly speaking the task of porting the code from C to C++ with minimal changes was not completed successfully since multiple C source files remain. Further neither the source code, nor the build system definitions have been fully pruned of dead code.

Some notable improvements could be observed:

- The agentic port required notably fewer interventions than before. This could be attributed to an improvement of the "superpowers" script set.

- The result of each stage was functional, something that required extensive corrections previously.

The mixing of unrelated variables serves as an exclusion criterion to me. This is a newly minted bug that should have been easy to avoid given structured work.

All of the above comes at a cost of time and energy:

| Task | Human duration [min] | Tool duration [min] | Human energy [Wh] | Tool energy [Wh] |
|---|---|---|---|---|
| Code ingestion and analysis | 72 | 12 | 157.8 | 40 |
| Remove BladeRF and extra architectures | 14 | 17 | 31.7 | 120 |
| Convert the project to use C++ compiler | 46 | 180 | 104.1 | 770 |
| Introduce Boost's program_options | 88 | 103 | 183.3 | 390 |
| Total | 220 | 312 | 476.9 | 1320 |

Overall the agentic update required 1320 [Wh] of energy achieving an outcome similar to, but worse than, the manual port in 312 minutes. In contrast the task was accomplished in 220 minutes by hand, utilising only 477 [Wh]. This comes out to a 1.42x increase in time spent on the same project and 2.77x increase in energy. Comparing to previous results in Conclusions, reflections and the future we observe a reduction in the time factor which is paid for by an increase in the energy factor.
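The totals and the two headline factors can be checked directly against the table. This is a small sketch using only the values printed there; the names are illustrative.

```python
# Rows copied from the table above:
# (task, human [min], tool [min], human [Wh], tool [Wh])
rows = [
    ("Code ingestion and analysis", 72, 12, 157.8, 40.0),
    ("Remove BladeRF and extra architectures", 14, 17, 31.7, 120.0),
    ("Convert the project to use C++ compiler", 46, 180, 104.1, 770.0),
    ("Introduce Boost's program_options", 88, 103, 183.3, 390.0),
]

human_minutes = sum(row[1] for row in rows)
tool_minutes = sum(row[2] for row in rows)
human_energy = sum(row[3] for row in rows)
tool_energy = sum(row[4] for row in rows)

# Factor > 1 means the agentic rewrite needed more than the manual one.
time_factor = tool_minutes / human_minutes
energy_factor = tool_energy / human_energy

print(f"time:   {tool_minutes} min vs. {human_minutes} min -> {time_factor:.2f}x")
print(f"energy: {tool_energy:.0f} Wh vs. {human_energy:.1f} Wh -> {energy_factor:.2f}x")
```

Both factors are computed from the total plug energy of the agentic run, not from the excess over idle, which is why the energy factor is 2.77x rather than 1.61x.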

This inverse relationship is intuitively pleasing: since the underlying hardware did not change a model that could be fully run on the GPU without the need to swap data to the system's RAM will utilise the GPU better. This means the GPU will be running at its maximum capacity for longer periods of time, thus drawing more power but delivering the results faster.

Again I am forced to conclude that LLMAC is not worth the time or energy. But the quality of the agentic rewrite has improved: the code at least compiles and runs. Still, these marginal improvements are paid for by a 1.23x increase in energy use compared to "Qwen3 Coder" used before. While my manual update does not provide tests of the quality of the resulting random number stream it also does not introduce additional dead code and code duplication.

With a little bit of tweaking, however, it will be possible to achieve a satisfactory result for the first two tasks using LLMAC in a shorter time than it took to do it by hand. For now using OpenCode + a local LLM seems to be a good way to quickly obtain a rough overview of a code base, i.e., let the LLM summarise the project without modifying it.